I’m having a lot of fun writing common_schema, an SQL project which includes views, tables and stored routines.

As the project grows (and it’s taking some interesting directions, in my opinion) more dependencies are being introduced, and a change to one routine or view may affect many others. This is why I’ve turned the development on common_schema to be test driven.

Now, just how do you test drive an SQL project?

Well, much like the way you test any other project in your favorite programming language. If its functions you’re testing, that’s all too familiar: functions get some input and provide some output. Hmmm, they might be changing SQL data during that time. With procedures it’s slightly more complex, since they do not directly return output but result sets.

Here’s the testing scheme I use:

- Tests are divided to families. For example, there is a family of tests for the eval() function.

- Each test in a family is responsible for checking the simplest, most “atomic” issue. This means many small tests.

- Each test can have a “pre-test” step, which prepares the ground (for example, create a table and populate it)

- Likewise, a test can have a “post-test” step, which is typically just cleanup code (since the test is already complete by the time the post step is invoked).

- Each test is an SQL file: a set of commands to be executed.

- A test may have an “expected output” file.

- If no explicit expected exists, the test is expected to return “1” (just as the most basic JUnit test assumes an “assert true”)

- A test family may also have pre- and post- steps.

- Any failure in any step fails the entire process. Failures may include:

- Failure to prepare the grounds for a test or family of tests

- Failure in executing the test

- Mismatch between test’s output and expected result.

- Failure in executing the post- step (may indicate yet invalid test result not intercepted by the test)

An example

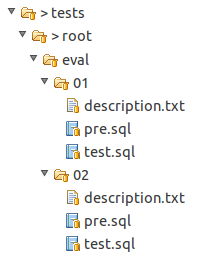

The following image presents a single test family: the eval family, testing the eval() routine.

- In this family, there are two tests.

- In both tests, we have a pre-test step, and a test.

- We have no post-test here.

- Nor do we have an expected-output sample, which means the tests expect to return with “1”.

Implementation

But how are tests conducted? Via mysql, of course. While tests are plain SQL text file, they are being executed against a running MySQL server using the mysql client. It is given the test files as input, and its output is directed to file as well.

This makes it very easy to code the test using a simple shell script. It takes a small measure of file iteration, process invocation, exit code check, and diff execution.

For example, to test eval()‘s 01 test, we first execute mysql with 01/pre.sql as input. Assuming success, we execute mysql again, this time with 01/test.sql. We capture the output of this execution, and compare it with expected-output, or with “1” when no expected-output specified.

Tests pass, or no code!

Some 12 years ago, I worked with a less-known version system called aegis. The thing I remember most from aegis was that it had a good tests infrastructure. Long before “test-driven development” was coined, or was even commonly practiced, aegis supported tests right into your version control. “Right into”, in the sense that you could not merge your code back to the baseline if it didn’t pass all of the tests.

I work with SVN for common_schema, and I do not know of such an option in SVN. But I also use ant to build this project, and the dependency is clear there: ant dist, my target which creates the distribution files, is dependent on ant test, the target which works out the tests.

That is, you cannot generate the distribution files when tests fail.

Further notes

Since I’m now retroactively patching tests for already existing functionality, calling it test-driven development is an overstatement; nevertheless new tests are already proving invaluable when I keep changing and improving existing code. Suddenly dependent functionality no longer works as expected. What fun!

The code for the testing suite is actually much shorter than this blog post.

Why didn’t you use and/or extend the MySQL test suite?

Daniel,

Was good to see you.

I suppose I could have used it. However, it is meant for testing of the MySQL server itself, or added functionality. The common schema is not an internal part of the server.

Another thing is that I don’t quite like the way the results are generated. I like more the approach I took which makes it more JUnit-like.