This is the third post in a series describing our experience migrating a large DWH server to TokuDB (see 1st and 2nd parts). This post discusses operations, namely ALTER TABLE operations in TokuDB. By this time we have run into quite a few use cases that we can shed light on.

Quick recap: we’ve altered one of our DWH slaves to TokuDB, with the goal of migrating most of our servers, including the master, to TokuDB.

Adding an index

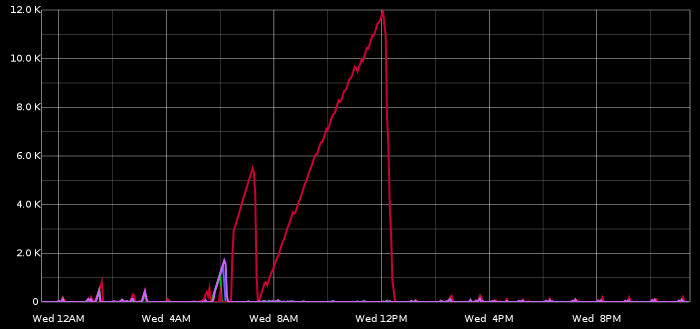

Shortly after migrating our server to TokuDB we noticed a disproportionate slave lag on our TokuDB slave (red line in the chart) as compared to other slaves.

A quick investigation revealed that, coincidentally, a manual heavy-duty operation was just taking place, which retroactively updated roughly a year’s worth of data. OK, but why so slow on TokuDB? Another quick investigation led to an apples vs. oranges problem: as depicted in part 1, our original setup included MONTHly partitioning on our larger tables, whereas we could not do the same in TokuDB, where we settled for YEARly partitioning.

The heavy-duty operation included a query that was relying on the MONTHly partitioning to do reasonable pruning: a WHERE condition on a date column did the right partition pruning; but where on InnoDB that would filter 1 month’s worth of data, on TokuDB it would filter 1 year.
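To make the difference concrete, here is a minimal sketch of how one might check pruning with EXPLAIN PARTITIONS (available in the MySQL 5.x releases of that era). The table name `my_table`, the date column `ts` and the date range are hypothetical; the real schema is not shown in this post.

```sql
-- Hypothetical table and column names; adjust to your own schema.
-- The `partitions` column of the output lists the partitions the query will read.
EXPLAIN PARTITIONS
SELECT COUNT(*)
FROM my_table
WHERE ts >= '2013-03-01' AND ts < '2013-04-01';
```

With MONTHly partitioning one would expect a single monthly partition to be listed. With YEARly partitioning the same condition selects the partition for the whole year, so the query reads far more data unless an index on the date column narrows it down.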

Wasn’t it suggested that TokuDB has online table operations? I decided to give it a shot and add a proper index on our date column (I actually created a compound index, but that is irrelevant here).

It took 13 minutes to add an index on a 1GB TokuDB table (approx. 20GB InnoDB uncompressed equivalent):

- The ALTER was non-blocking: the table remained unlocked for the duration

- The client issuing the ALTER was blocked (I thought it would happen completely in the background) — but who cares?

- I would say 13 minutes is fast

Not surprisingly adding the index eliminated the problem altogether.
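For reference, the statement was roughly of the following shape. This is only a sketch: `ts` stands for the date column, `c1` for the second column of the compound index, and `ts_c1_idx` is a made-up index name. Whether the operation runs online may depend on your TokuDB version and settings, so test on a non-critical server first.

```sql
-- Hypothetical column and index names; the actual ones are not shown in this post.
ALTER TABLE my_table ADD INDEX ts_c1_idx (ts, c1);

-- Afterwards, confirm the query picks up the new index
-- (look at the `key` column of the output).
EXPLAIN
SELECT COUNT(*)
FROM my_table
WHERE ts >= '2013-03-01' AND ts < '2013-04-01';
```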

Modifying a PRIMARY KEY

It was suggested by our DBA that there was a long-standing need to modify our PRIMARY KEY. It was impossible to achieve with our InnoDB setup (not enough disk space for the operation, and it would take weeks to complete even if we did have the disk space). Would it be possible to modify our TokuDB tables? On some of our medium-sized tables we issued an ALTER of the form:

ALTER TABLE my_table DROP PRIMARY KEY, ADD PRIMARY KEY (c1, c2, c3, ...);

Time-wise the operation completed in good time. We did note, however, that the disk space consumed by the new table was doubled. Was it due to the fact we added two columns to our PK? Did that account for the bloated space? I did not believe so, and decided to rebuild the table:

OPTIMIZE TABLE my_table;

Nope. Space not reduced. However, we were unconvinced and asked. As usual, we got a quick response from the Tokutek team; this was a bug: while our original table used the TOKUDB_SMALL row format (high compression), the table rebuild reset it to TOKUDB_FAST (normal compression), which makes for roughly twice the file size. The bug was filed as: alter table operations that rebuild the table lose the original tokudb compression.

Now, we were altering the PRIMARY KEY. We were not expecting an online operation anyhow, and didn’t mind blocking the table; hence the solution was simple: make sure to specify the row format:

ALTER TABLE my_table DROP PRIMARY KEY, ADD PRIMARY KEY (c1, c2, c3, ...) ENGINE=TokuDB ROW_FORMAT=TOKUDB_SMALL;

This worked in terms of disk space, but we only later realized it would still cause us trouble.

Modifying a PRIMARY KEY on our largest table

We moved on to our largest table: originally 1TB InnoDB COMPRESSED, equivalent to 2TB uncompressed. With TokuDB it went down to 100GB. Converting this table to TokuDB took about 40 hours, which is just fast. We issued an ALTER TABLE modifying the PRIMARY KEY as above and waited.

The operation did not complete after 40 hours. Nor after 3 days. By day 4 we thought we might look into this. Fortunately, TokuDB is friendly on SHOW PROCESSLIST and provides you with useful information, such as “Fetched about 1234567890 rows, loading data still remains”. Yikes! We extrapolated the values to realize it would take 2 weeks to complete! The weekend went by and we decided to find a better way. Again, posting on the tokudb-user group, we got a definitive answer: a table rebuild does not utilize the bulk loader (you really want to be friends with the bulk loader, it’s the process that loads your data quickly).
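The extrapolation itself is simple linear arithmetic, sketched below. All three values are illustrative placeholders rather than the actual numbers from our server, and the estimate assumes a constant fetch rate and ignores the separate "loading data" phase that follows.

```sql
-- Illustrative placeholders only, not measurements from this post.
SET @rows_fetched  = 3000000000;   -- taken from the SHOW PROCESSLIST status message
SET @rows_total    = 10500000000;  -- estimated number of rows in the table
SET @elapsed_hours = 96;           -- hours since the ALTER started

-- Scale the elapsed time linearly by the fraction of rows still to fetch.
SELECT
  @elapsed_hours * @rows_total / @rows_fetched       AS est_total_hours,
  @elapsed_hours * (@rows_total / @rows_fetched - 1) AS est_remaining_hours;
```

With these placeholder values the total estimate works out to 336 hours, i.e. 14 days, of which 240 hours would still remain.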

And so we chose to KILL the ALTER process and go another way; again, KILLs are very easy with TokuDB ALTER operations: it took 3 minutes to abort this week-old operation. The alternative operation was:

CREATE TABLE my_table_New LIKE my_table;

ALTER TABLE my_table_New DROP PRIMARY KEY, ADD PRIMARY KEY (c1, c2, c3, ...) ENGINE=TokuDB ROW_FORMAT=TOKUDB_SMALL;

INSERT INTO my_table_New SELECT * FROM my_table;

RENAME TABLE my_table TO my_table_Old, my_table_New TO my_table;

DROP TABLE my_table_Old;

The INSERT INTO … SELECT operation does use the bulk loader when you do it on an empty table. It completed within merely 30 hours. Hurrah!

DROPping a TABLE

It was an immediate operation to drop our “Old” table — subsecond. Nothing like your InnoDB DROP.

Hi Shlomi!

I’m wondering how things are going, now that it’s been in production for 6 months or so?

Best Regards

Hi Matt,

As you say, it’s in production; we’re running a mixed topology of TokuDB and InnoDB. We keep those InnoDB servers to be on the safe side.

We have two standing bugs, both related to locking. In one bug we get frequent “lock wait timeout” errors upon concurrent writes to the same table (even though they touch different areas). In the other bug we get mysterious, inconsistent slowness on LOAD DATA INFILE; we just caught one today, and it relates to locks as well.

I should mention we’re still doing YEARly partitioning even though we would really like to do DAYly or MONTHly, due to the open files issue mentioned in the previous part.

Other than the above we’re good. I haven’t tested OLTP load, just OLAP (low write concurrency, infrequent big reads).