orchestrator 3.0 Pre-Release is now available. Most notable are Raft consensus, SQLite backend support, and orchestrator-client, a client script that requires no binary.

TL;DR

You may now set up high availability for orchestrator via raft consensus, without needing to set up high availability for orchestrator's backend MySQL servers (such as Galera/InnoDB Cluster). In fact, you can run an orchestrator/raft setup using an embedded SQLite backend DB. Read on.

orchestrator still supports the existing shared backend DB paradigm; nothing dramatic changes if you upgrade to 3.0 and do not configure raft.

orchestrator/raft

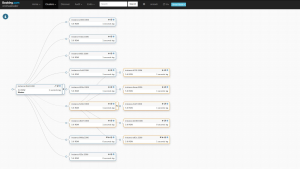

Raft is a consensus protocol, supporting leader election and consensus across a distributed system. In an orchestrator/raft setup, orchestrator nodes talk to each other via the raft protocol, form consensus and elect a leader. Each orchestrator node has its own dedicated backend database. The backend databases do not speak to each other; only the orchestrator nodes speak to each other.

No MySQL replication setup is needed; the backend DBs act as standalone servers. In fact, the backend server doesn’t have to be MySQL: SQLite is also supported. orchestrator now ships with SQLite embedded, so no external dependency is needed.
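
To make this concrete, here is a rough sketch of what one node's configuration might look like in a three-node orchestrator/raft setup with the embedded SQLite backend. The key names follow orchestrator's raft and SQLite configuration documentation as I understand it; the file paths, the port, and the bracketed host placeholders are assumptions for illustration only, so check them against the documentation for the release you run.

```json
{
  "BackendDB": "sqlite",
  "SQLite3DataFile": "/var/lib/orchestrator/orchestrator.db",
  "RaftEnabled": true,
  "RaftDataDir": "/var/lib/orchestrator",
  "RaftBind": "<ip.or.fqdn.of.this.node>",
  "DefaultRaftPort": 10008,
  "RaftNodes": [
    "<ip.or.fqdn.of.node1>",
    "<ip.or.fqdn.of.node2>",
    "<ip.or.fqdn.of.node3>"
  ]
}
```

Each node would carry the same node list, with its own address as the bind address and its own local SQLite file. Nothing in this fragment refers to another node's database, which matches the picture above: only the orchestrator nodes speak to each other.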